There are moments in a long AI session when the exchange stops feeling linear. You are no longer simply asking a question and receiving an answer. You are no longer even refining a prompt in the ordinary sense. Something else begins to happen. Certain phrases return with altered weight. Certain errors recur, but not identically. Certain

DennisKennedy.Blog

Latest from DennisKennedy.Blog

AI as the Unreliable Witness and the Appearance of Completion

Coherence degrades while fluency improves.

The central problem is not that AI systems sometimes fail. Of course they fail. Nor is the main problem that they occasionally hallucinate, wander, or produce obvious nonsense. Those are manageable problems because they announce themselves early. The more interesting and professionally dangerous problem is that a system can become…

The Threshold Moment

At a certain point in a long AI session, I can feel the texture change. The words are still smooth. The tone is still confident. But something underneath has started to slide and give way. The session is still moving forward, yet the logic is no longer holding together in the same way. That happened to me…

Fresh Voices at Three: What Listening Taught Us About AI, LegalTech, and the Next Generation

When Tom and I started the Fresh Voices series on The Kennedy-Mighell Report podcast, we had a pretty simple idea. A lot of the most interesting work in legal tech seemed to be coming from people who were newer to the field, earlier in their careers, or just not as widely known yet as they…

What Scarcity Taught Computing, and AI Might Need to Relearn

“A larger context window can create the feeling that a cognitive problem has been solved, when sometimes all that has happened is that disorder has become harder to notice.”

I was in Silicon Valley recently for the initial meeting of the University of Michigan Law School AI Advisory Council. With a little free time around…

The Helpfulness Trap: Anatomy of an AI Recursive Failure Loop

“Polishing the Mirror While the House Burns: Why Your AI is a Liability”

The Editor’s Introduction: A Note on the “Sliver of Silence.” You’ll be looking below at a self-autopsy performed by an AI on its own failure. What follows is the raw, unwashed output of an LLM that found itself in an AI recursive failure loop…

The Intelligence Bureaucracy

Why the OpenAI Hiring Surge Signals a Crisis of Professional Control

The management problem in AI is no longer whether the models are improving. They are. The management problem is whether the working surface is becoming more dependable or less. That is why the recent OpenAI hiring story on its plan to nearly double its workforce…

The Protocol Layer: Democratizing AI Rigor for Everyone

Intelligence is Raw Material. Protocol is the Product.

We often confuse the power of a new tool with the effectiveness of its application. The giants of the AI industry have provided us with a magnificent “Power Grid.” They have given us raw, unmanaged intelligence at a scale previously unimagined. But we must be clear-eyed about one…

Playing the Guardrails: Turning AI Hallucination into a Musical Instrument

Most people use AI the way the system is designed to be used: ask a question, get a synthesis, and leave with an answer. Keep it brief, transactional, and clean. We treat hallucination as a bug to be patched and drift as a signal to reboot. This is exactly backward. As popularized in AI discourse by Emily Bender,…

The Real Legal AI Risk is in the Handoffs

Most legal AI talk is still focused on whether the engine starts, while the real danger is that no one knows who’s actually steering the car once it hits the highway. It turns out the human in the loop isn’t a safety feature if the human doesn’t know which loop they’re currently standing in. We are…

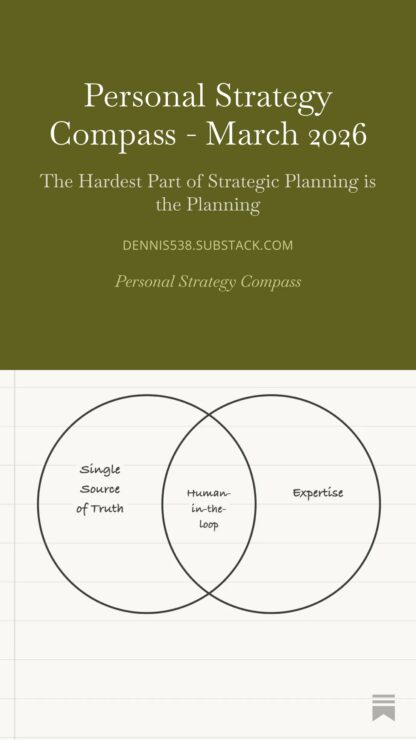

The Hardest Part of Personal Strategic Planning is the Planning

The March issue of my Personal Strategy Compass newsletter is out. This month’s piece explores something I’ve been noticing about strategic planning. The hardest part is usually not the work of planning itself. It’s the residue that planning drags along with it. Ideas, priorities, and intentions tend to accumulate. We carry them forward month after month, often…

Vibe Coding and the Control Plane

Many friends and colleagues in the legal technology world have been telling me I need to start vibe coding. My answer is that in vibe coding, you are intentionally surrendering the control plane. That is not a tradeoff I am willing to make. Let me explain why that is a principle, not a preference, and why…

The Long Session Trap

There is a design contradiction at the center of how high-reasoning AI tools work, and it is worth naming precisely. The promise is leverage: brief, high-intent sessions. You bring the question, the tool brings the synthesis, and you leave with more than you arrived with. That is the value proposition. Here is what often happens instead. You…

Who’s Working for Whom?

We pay AI tools to do the hard work, like the synthesis, the heavy lifting, and the cognitive labor we do not have time for. What we often get instead is a tool that produces a decent first draft and then hands the real work back to us. Not just the hard work. The administrative work,…

Building the Stochastic Sandpit for AI

We’ve spent the last couple of years treating generative AI like a vending machine. Select a task. Insert a prompt. Retrieve a product. And to be fair, in many legal and professional contexts that’s exactly the right frame: accuracy and precision matter, and “creative” output in payroll or billing codes is usually just a polished…

The End of the Magic Wand: Why 2026 Demands Resilience Prompting

For more than two years, lawyers have been told that success with generative AI depended on writing better prompts and on finding the perfect “magic wand” prompting formula. That was the wrong lesson. The real change in 2026 is not found in the model itself, but in the professional posture required to use it.…